AI in Lease Accounting: What Auditors Need to Know

Every vendor claims “AI-powered.” The critical question is whether the AI calculates or advises. Here’s how to evaluate those claims, and why the distinction matters for audit assurance.

An auditor recalculates a 60-month office lease at month 1. Pen and paper, IFRS 16’s prescribed method. The recalculated interest expense lands within a few cents of the figure in the client’s system. Three cents, in this case.

Three cents is a small number, but it carries a real question. Did the system produce that figure because it’s the only figure those inputs can produce, or because it’s the figure the system happened to produce today? How that gets resolved decides how much substantive testing the rest of the audit needs.

The architectural question

When a vendor says “AI-powered,” there’s one thing worth asking before anything else. Is the AI computing the journal entry, or is it sitting beside the engine that does?

Most lease accounting tools today fall into the second pattern. A deterministic engine, built from coded logic, fixed formulas, and integer-cent arithmetic, produces the present values, schedules, and journals. AI helps with the messy human parts: setup, explanation, drafting rate memos, flagging odd inputs. The two systems run on separate rails.
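To make “deterministic engine” and “integer-cent arithmetic” concrete, here is a minimal sketch, assuming equal monthly payments in arrears and a monthly rate already derived from the approved annual rate (that conversion is a policy choice not shown). The function names are hypothetical, not LeaseAccounting.app’s API. Amounts are held as integer cents, intermediate values use Python’s `decimal` module at a fixed precision, and the final month absorbs accumulated rounding so the liability closes at exactly zero.

```python
from decimal import Decimal, ROUND_HALF_UP, localcontext


def to_cents(amount: Decimal) -> int:
    """Round an amount in currency units to whole cents, half-up."""
    return int((amount * 100).quantize(Decimal("1"), rounding=ROUND_HALF_UP))


def present_value_cents(payment_cents: int, monthly_rate: Decimal, months: int) -> int:
    """PV of equal end-of-month payments (payments in arrears), in cents."""
    with localcontext() as ctx:
        ctx.prec = 34  # fixed precision: identical inputs give identical digits
        payment = Decimal(payment_cents) / 100
        factor = Decimal(1) + monthly_rate
        pv = sum((payment / factor ** k for k in range(1, months + 1)), Decimal(0))
        return to_cents(pv)


def amortisation_schedule(payment_cents: int, monthly_rate: Decimal, months: int):
    """Rows of (month, opening, interest, payment, closing), all in integer cents."""
    opening = present_value_cents(payment_cents, monthly_rate, months)
    rows = []
    with localcontext() as ctx:
        ctx.prec = 34
        for month in range(1, months + 1):
            if month < months:
                interest = to_cents(Decimal(opening) / 100 * monthly_rate)
            else:
                # Final month: interest absorbs accumulated rounding so the
                # liability closes at exactly zero (a hypothetical convention).
                interest = payment_cents - opening
            closing = opening + interest - payment_cents
            rows.append((month, opening, interest, payment_cents, closing))
            opening = closing
    return rows
```

Calling `amortisation_schedule(100_000, Decimal("0.004"), 60)` twice returns identical rows, which is the property the rest of this article relies on. No step depends on anything outside the inputs.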

A smaller number of tools blur the line and let a model produce or adjust the calculation outputs themselves. That becomes a problem the moment those outputs land in the financial statements. A language model can return slightly different numbers for the same prompt depending on its version, its sampling settings, its context window, sometimes just the order of inputs. Reproducibility breaks. So does the work an auditor does under ISA 540 (Revised). The standard has the auditor evaluate the method, significant assumptions and data behind each accounting estimate (lease term, discount rate, modification judgements), and one of its responses to assessed risk is developing an independent point estimate or range. That gets much harder when the “method” is a prompt.

If the system can’t produce the same number twice, the audit approach has to compensate.

What AI is good at

The advisory work is where most of the time saving shows up. A lot of lease data entry is judgement dressed up as data entry. Does the right-of-use period run from the signing date or the commencement date? Is a CPI-linked payment part of the liability, or expensed as it arises? Is this change a modification or a remeasurement? Zen AI sits over the input forms in LeaseAccounting.app and explains, in context, what the standard says about each choice and what it will do downstream. Most input errors get caught at the point they’re made.

Once a calculation runs, the same assistant can read the output and explain it: what drove this month’s interest, why the ROU asset stepped down by that exact amount, how a modification was absorbed into the carrying value. The explanation is generated from the deterministic result, not in place of it, so the schedule stays the record: the explanation can describe it but never change it.

Discount rates are where this matters most. Under IFRS 16, the lessee discounts at the interest rate implicit in the lease and uses its incremental borrowing rate when that rate isn’t readily determinable. Under FRS 102, the lessee can choose, lease by lease, between an IBR and an obtainable borrowing rate (the OBR, unique to FRS 102). Either route takes reference-rate data, a credit-spread judgement, and a written rationale. Discount Rate Advisor pulls reference rates from central-bank sources (ECB, Bank of England, Riksbank, Norges Bank) and drafts the rate memo. The draft is exactly that. The user reviews it, edits it, approves it. The approved rate is what flows into the engine. The model never sets the rate.
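The draft, review, approve boundary can be enforced in code rather than left to procedure. A minimal sketch, assuming a hypothetical `RateMemo` record and a hypothetical `rate_for_engine` gate (neither is the product’s actual API): the assistant proposes reference rate plus credit spread, but the engine only accepts a rate once a named human has approved it, and an OBR basis is rejected outside FRS 102.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


@dataclass
class RateMemo:
    lease_id: str
    framework: str            # "IFRS 16", "FRS 102" or "ASC 842"
    basis: str                # "IBR" or "OBR"
    reference_rate: Decimal   # central-bank reference rate, as a fraction
    credit_spread: Decimal    # credit-spread judgement, as a fraction
    rationale: str            # drafted text, editable by the reviewer
    drafted_by: str = "assistant"
    approved_by: Optional[str] = None
    approved_rate: Optional[Decimal] = None

    def __post_init__(self) -> None:
        if self.basis == "OBR" and self.framework != "FRS 102":
            raise ValueError("an obtainable borrowing rate is only available under FRS 102")

    @property
    def proposed_rate(self) -> Decimal:
        return self.reference_rate + self.credit_spread

    def approve(self, reviewer: str, rate: Optional[Decimal] = None) -> None:
        """Record a human approval; the reviewer may override the proposed rate."""
        if reviewer == self.drafted_by:
            raise ValueError("the drafter cannot approve its own memo")
        self.approved_by = reviewer
        self.approved_rate = self.proposed_rate if rate is None else rate


def rate_for_engine(memo: RateMemo) -> Decimal:
    """Hypothetical single path by which a discount rate reaches the engine."""
    if memo.approved_by is None or memo.approved_rate is None:
        raise ValueError(f"rate memo for {memo.lease_id} is not approved")
    return memo.approved_rate
```

The design choice is that an unapproved memo raises an error instead of falling back to the proposed rate, so a missing approval surfaces as a failure rather than a silent number.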

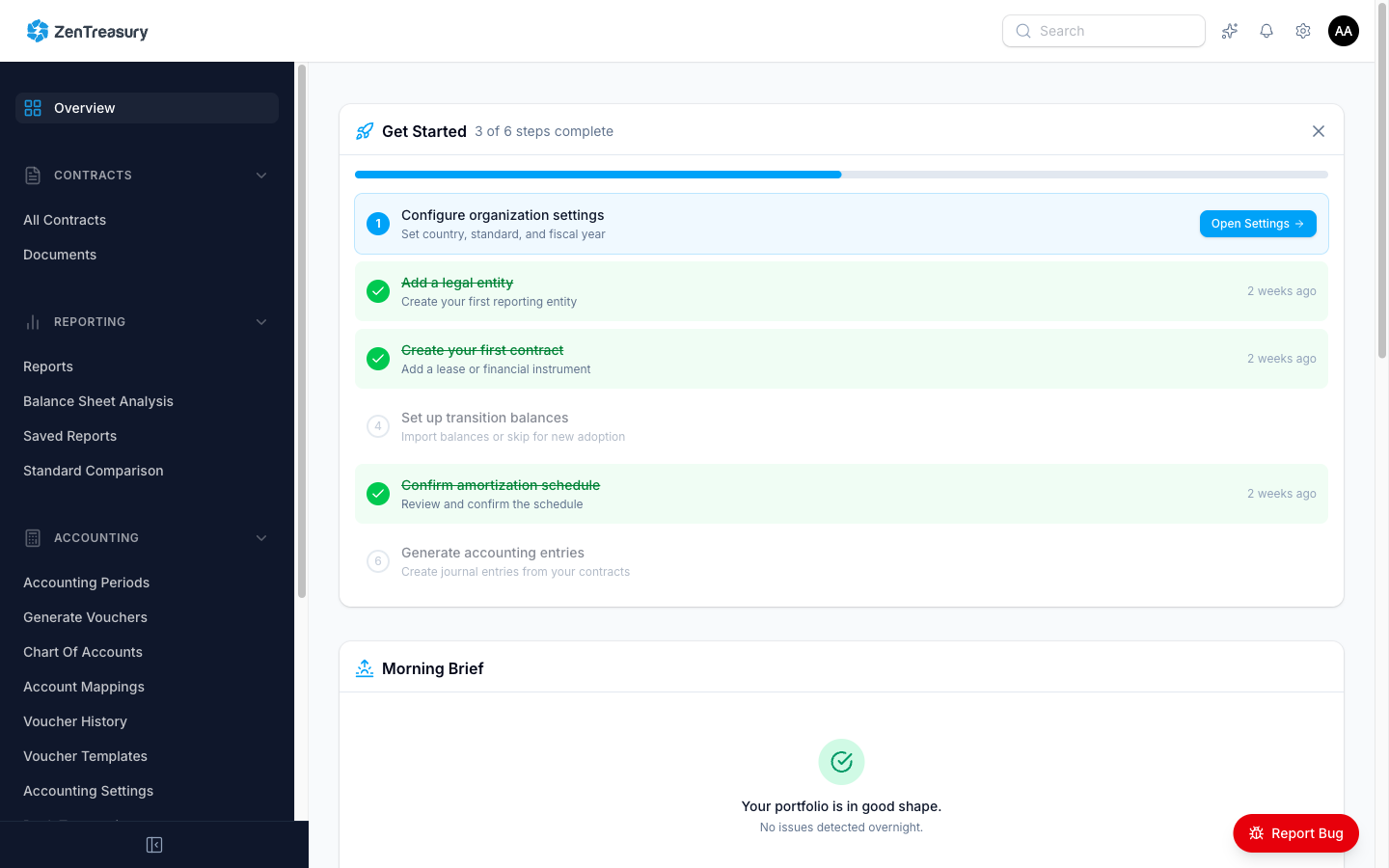

The same pattern shows up elsewhere. Portfolio-wide checks catch inputs the engine would otherwise process without question: a lease term entered as 480 months instead of 48, an indexation entered as 2.5 instead of 0.025, a commencement date five years before the company existed. A daily Morning Brief surfaces compliance items that need attention, such as indexations due, expiring leases needing terminal entries, missing rate documentation, and modifications that haven’t been actioned. None of this touches a number. It tells the user which numbers might be wrong.
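Checks of this kind flag inputs without changing them. A minimal sketch, assuming a hypothetical `LeaseInput` record and illustrative thresholds (a 300-month term ceiling and a 20% indexation ceiling) that a real portfolio would tune; the record is frozen, so the check cannot alter a number even by accident.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class LeaseInput:
    lease_id: str
    term_months: int
    indexation_rate: float  # annual uplift as a fraction: 0.025 means 2.5%
    commencement: date


def flag_suspicious_inputs(
    lease: LeaseInput,
    incorporated: date,
    max_term_months: int = 300,    # hypothetical threshold
    max_index_rate: float = 0.20,  # hypothetical threshold
) -> List[str]:
    """Return human-readable warnings; the lease itself is never modified."""
    flags = []
    if lease.term_months > max_term_months:
        flags.append(
            f"{lease.lease_id}: term of {lease.term_months} months exceeds "
            f"{max_term_months}; check for an extra digit"
        )
    if lease.indexation_rate > max_index_rate:
        flags.append(
            f"{lease.lease_id}: indexation of {lease.indexation_rate} looks like a "
            f"percentage; a fraction of {lease.indexation_rate / 100} may be intended"
        )
    if lease.commencement < incorporated:
        flags.append(
            f"{lease.lease_id}: commencement {lease.commencement.isoformat()} is before "
            f"incorporation on {incorporated.isoformat()}"
        )
    return flags
```

A lease entered as 480 months at 2.5 with a commencement date before incorporation would return three warnings; the corrected lease returns none.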

What the engine handles

The calculation engine handles present value, amortisation, depreciation, interest accrual, modification recalculations, and FX retranslation. It is conventional software: reproducible, inspectable, and validated against the IASB’s IFRS 16 Illustrative Examples, the FRS 102 §20 worked examples, and the ASC 842 illustrative examples. Over 50 golden tests at last count, run on every commit. Same inputs, same outputs, every time, regardless of whether the AI layer is on, off, or removed entirely.
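A golden test pins a fixed input to an exact, previously verified output and fails on any difference, with no tolerance. A minimal sketch, assuming a hypothetical straight-line ROU depreciation function that places leftover cents in the final month; the fixtures are illustrative values worked out by hand, not the IASB, FRC or FASB examples themselves.

```python
from typing import Dict, List


def straight_line_depreciation(cost_cents: int, months: int) -> List[int]:
    """Equal monthly charges; hypothetical convention puts leftover cents last."""
    base, remainder = divmod(cost_cents, months)
    return [base] * (months - 1) + [base + remainder]


GOLDEN_CASES: List[Dict] = [
    {"name": "three months, one-cent remainder", "cost_cents": 100_000,
     "months": 3, "expected": [33_333, 33_333, 33_334]},
    {"name": "twelve months, even split", "cost_cents": 120_000,
     "months": 12, "expected": [10_000] * 12},
]


def run_golden_tests(cases: List[Dict]) -> List[str]:
    """Return a failure message per case; an empty list means all passed."""
    failures = []
    for case in cases:
        actual = straight_line_depreciation(case["cost_cents"], case["months"])
        if actual != case["expected"]:  # exact equality, cent for cent
            failures.append(f"{case['name']}: expected {case['expected']}, got {actual}")
        elif sum(actual) != case["cost_cents"]:  # charges must add back to cost
            failures.append(f"{case['name']}: charges do not sum to cost")
    return failures
```

Run in CI on every commit, a non-empty result blocks the change. That is how “same inputs, same outputs” is checked rather than asserted.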

There is no model in the calculation path. If Zen AI were deleted tomorrow, every schedule and every journal would still produce the same numbers.

The boundary is policy as well as architecture. Customer data is not used to train AI models. Document content sent to our AI provider is deleted within 24 hours of session completion. Structured identifiers like IBAN, card numbers, email addresses, and phone numbers are stripped from prompts before they leave our infrastructure. Those commitments are documented on the security page.
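Stripping structured identifiers before a prompt leaves your infrastructure can be sketched with Python’s `re` module. The patterns below are deliberately simple and hypothetical, not the product’s actual filter: card numbers are confirmed with a Luhn check to cut false positives, and phone numbers are only matched in international `+` format so that dates and amounts in lease data survive. A production filter would need broader coverage and its own testing.

```python
import re

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IBAN = re.compile(r"\b[A-Z]{2}\d{2}(?: ?[A-Z0-9]{4}){2,7}(?: ?[A-Z0-9]{1,4})?\b")
CARD = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")  # 13 to 19 digits
PHONE = re.compile(r"\+\d{1,3}[\d ()-]{6,}\d")  # international format only


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, as used by payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def _redact_card(match: re.Match) -> str:
    digits = re.sub(r"\D", "", match.group(0))
    return "[CARD]" if luhn_valid(digits) else match.group(0)


def redact(text: str) -> str:
    """Replace identifiers with placeholders; order matters (emails and IBANs first)."""
    text = EMAIL.sub("[EMAIL]", text)
    text = IBAN.sub("[IBAN]", text)
    text = CARD.sub(_redact_card, text)
    text = PHONE.sub("[PHONE]", text)
    return text
```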

That separation is what lets an auditor work the way ISA 540 expects. Pick a sample, recalculate under the standard’s prescribed method, reconcile to the system output to the cent. Where it doesn’t reconcile, the difference lies in an input, a judgement or a rounding convention, not in something the model decided to do this morning.
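The reconciliation step fits in a short script. A minimal sketch, assuming month-1 interest is the opening liability times the annual rate divided by 12, rounded half-up to the cent; the sample records and field names are hypothetical. Anything non-zero is a difference to explain, rather than one to accept.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Dict, List


def month_one_interest_cents(opening_cents: int, annual_rate: Decimal) -> int:
    # Assumption: monthly rate = annual rate / 12, interest rounded half-up to the cent.
    interest = Decimal(opening_cents) * (annual_rate / 12)
    return int(interest.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


def reconcile(sample: List[Dict]) -> Dict[str, int]:
    """Map lease_id to (system minus auditor) in cents, for non-zero differences only."""
    differences = {}
    for lease in sample:
        expected = month_one_interest_cents(lease["opening_cents"], lease["annual_rate"])
        diff = lease["system_interest_cents"] - expected
        if diff != 0:
            differences[lease["lease_id"]] = diff
    return differences
```

With a hypothetical 90,000.00 lease at 5% whose system shows 375.03 of month-1 interest, `reconcile` reports a three-cent difference for that lease and nothing for leases that tie out exactly.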

What to verify

A few things are worth doing on any engagement where the client’s lease tool advertises AI.

Start by getting the architecture in writing. The boundary between advisory AI and the calculation engine should be documented somewhere the client can show you, not just in marketing copy. “AI-powered calculations” with no further detail is a flag.

Then run the same lease twice. This is the cheapest test there is, and it’s decisive: pick five leases, recalculate them independently from the client’s inputs, compare to the system output, then have the system re-run the same calculations a week later. If the cents drift between runs, something non-deterministic is in the path.
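The re-run comparison needs nothing more than two exports of the same schedules and an exact comparison. A minimal sketch, assuming the system can export a CSV with hypothetical columns `lease_id`, `period` and `interest_cents`; every difference, including a row present in one run but not the other, is reported rather than tolerated.

```python
import csv
import io
from typing import Dict, List, Tuple


def load_schedule(csv_text: str) -> Dict[Tuple[str, int], int]:
    """Index an exported schedule by (lease_id, period)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {(row["lease_id"], int(row["period"])): int(row["interest_cents"]) for row in reader}


def drift_between_runs(first_csv: str, second_csv: str) -> List[str]:
    """List every (lease, period) whose interest differs between two runs."""
    first, second = load_schedule(first_csv), load_schedule(second_csv)
    findings = []
    for key in sorted(first.keys() | second.keys()):
        a, b = first.get(key), second.get(key)
        if a != b:  # exact comparison: no tolerance for drifting cents
            findings.append(f"{key[0]} period {key[1]}: run 1 = {a}, run 2 = {b}")
    return findings
```

An empty list is the expected result for a deterministic engine; a single line of output is enough to widen substantive testing.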

Check the audit trail on AI-drafted artefacts (rate memos, setup decisions, modification narratives). The trail should show the draft, the human review, and the approval as separate events with separate timestamps.
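The same check on the audit trail can be scripted against an event export. A minimal sketch, assuming a hypothetical export in which each event has `event`, `actor` and `timestamp` fields and the event names are `drafted`, `reviewed` and `approved`. It confirms that all three exist, carry distinct timestamps in the right order, and that the approver is not the drafter.

```python
from datetime import datetime
from typing import Dict, List

REQUIRED_SEQUENCE = ("drafted", "reviewed", "approved")


def check_trail(events: List[Dict[str, str]]) -> List[str]:
    """Return problems with one artefact's trail; an empty list means it passes."""
    first_of: Dict[str, Dict[str, str]] = {}
    for event in events:
        first_of.setdefault(event["event"], event)  # keep the first occurrence of each type
    problems = [f"missing '{step}' event" for step in REQUIRED_SEQUENCE if step not in first_of]
    if problems:
        return problems
    times = [datetime.fromisoformat(first_of[step]["timestamp"]) for step in REQUIRED_SEQUENCE]
    if len(set(times)) < len(times):
        problems.append("draft, review and approval are not separately timestamped")
    if times != sorted(times):
        problems.append("events are out of order")
    if first_of["approved"]["actor"] == first_of["drafted"]["actor"]:
        problems.append("approved by the same actor that drafted it")
    return problems
```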

Use the auditor portal directly. Vault gives auditors period-locked access to the lease register, schedules, journals, and evidence packs without exporting anything by email. The activity log shows what was opened and when.

Walk the evidence pack end to end: lease register, rate memos, calculation schedules, journals, rollforward, judgement documentation, maturity analysis. Anything model-drafted should be visible as such; everything calculated should reconcile to your independent recalculation.

What it means for the audit

When the calculation engine is deterministic and the AI stays advisory, with human approval of every AI-drafted output, the audit becomes the same audit you’d run on any well-controlled system, just faster, because the inputs and the rationale are documented before you arrive. When those conditions don’t hold, you’re back to substantive testing on a black box.

A three-cent difference like the one in the opening almost always comes down to a rounding convention. The only reason that’s a comfortable answer is that the engine produces the same answer twice.
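To see how a rounding convention alone can open a gap of a few cents, here is a minimal sketch with hypothetical inputs: a 90,000.00 opening liability at 5% a year, with the monthly rate taken as the annual rate divided by 12. One convention keeps the monthly rate at full precision; the other rounds it to six decimal places before applying it. Both are deterministic, so each reproduces itself on every run, and for these inputs they give 375.00 and 375.03.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Optional


def month_one_interest(opening_cents: int, annual_rate: Decimal,
                       rate_places: Optional[int] = None) -> int:
    """Month-1 interest in cents, optionally rounding the monthly rate first."""
    monthly = annual_rate / 12
    if rate_places is not None:
        # Alternative convention: round the monthly rate before applying it.
        monthly = monthly.quantize(Decimal(1).scaleb(-rate_places), rounding=ROUND_HALF_UP)
    interest = Decimal(opening_cents) * monthly
    return int(interest.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


full_precision = month_one_interest(9_000_000, Decimal("0.05"))
rounded_rate = month_one_interest(9_000_000, Decimal("0.05"), rate_places=6)
print(rounded_rate - full_precision)  # 3
```

Once the convention is identified, the auditor can reproduce the client’s figure exactly, which turns an unexplained difference into a documented one.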

Further reading

- Why Spreadsheets Fail for Lease Accounting: the structural failure points auditors flag, from modification chains to evidence packs

- FRS 102 Section 20: What UK SMEs Need to Know Before December 2026: the on-balance-sheet transition and what to do this year

LeaseAccounting.app keeps AI advisory and deterministic calculations on separate rails, so your auditor can verify every number independently. Start free with 2 leases, or invite your auditor to Vault for structured evidence access.